Network Access Policies

Kubernetes Network Policies control traffic flow within and across namespaces in the cluster. They let you explicitly control who is allowed to communicate with your service, and who your service is allowed to communicate with. The default policy in Gjensidige's clusters is to deny all incoming and outgoing traffic, creating a Zero Trust Networking Model where services are not trusted by default.

We are currently migrating from Kubernetes Network Policies to Cilium Network Policy. We recommend using Cilium Network Policy for new applications. For more details, see the Cilium Network Policy section below.

Default Network Policy

The following NetworkPolicy is applied to all team namespaces:

apiVersion: networking.k8s.io/v1
kind: NetworkPolicy
metadata:
  name: "namespace-default"
  namespace: "<team-namespace>"
spec:
  podSelector: {} # Match all pods in namespace
  policyTypes:
    - Egress
    - Ingress
  egress:
    - to:
        - ipBlock:
            cidr: "0.0.0.0/0" # Allow egress out of the cluster
            except: # Deny egress to pods inside the Kubernetes private network
              - "10.0.0.0/8"
              - "172.16.0.0/12"
              - "192.168.0.0/16"
        - namespaceSelector: {}
          podSelector:
            matchLabels:
              k8s-app: "kube-dns" # Allow egress to kube-dns to be able to resolve DNS names
  ingress:
    - from:
        - namespaceSelector: # Allow ingress from NGINX
            matchLabels:
              name: "system-ingress"
          podSelector:
            matchLabels:
              app-name: "ingress-nginx"
    # Some ingress policies including common monitoring tools like Prometheus are left out for brevity

Overriding the default policy

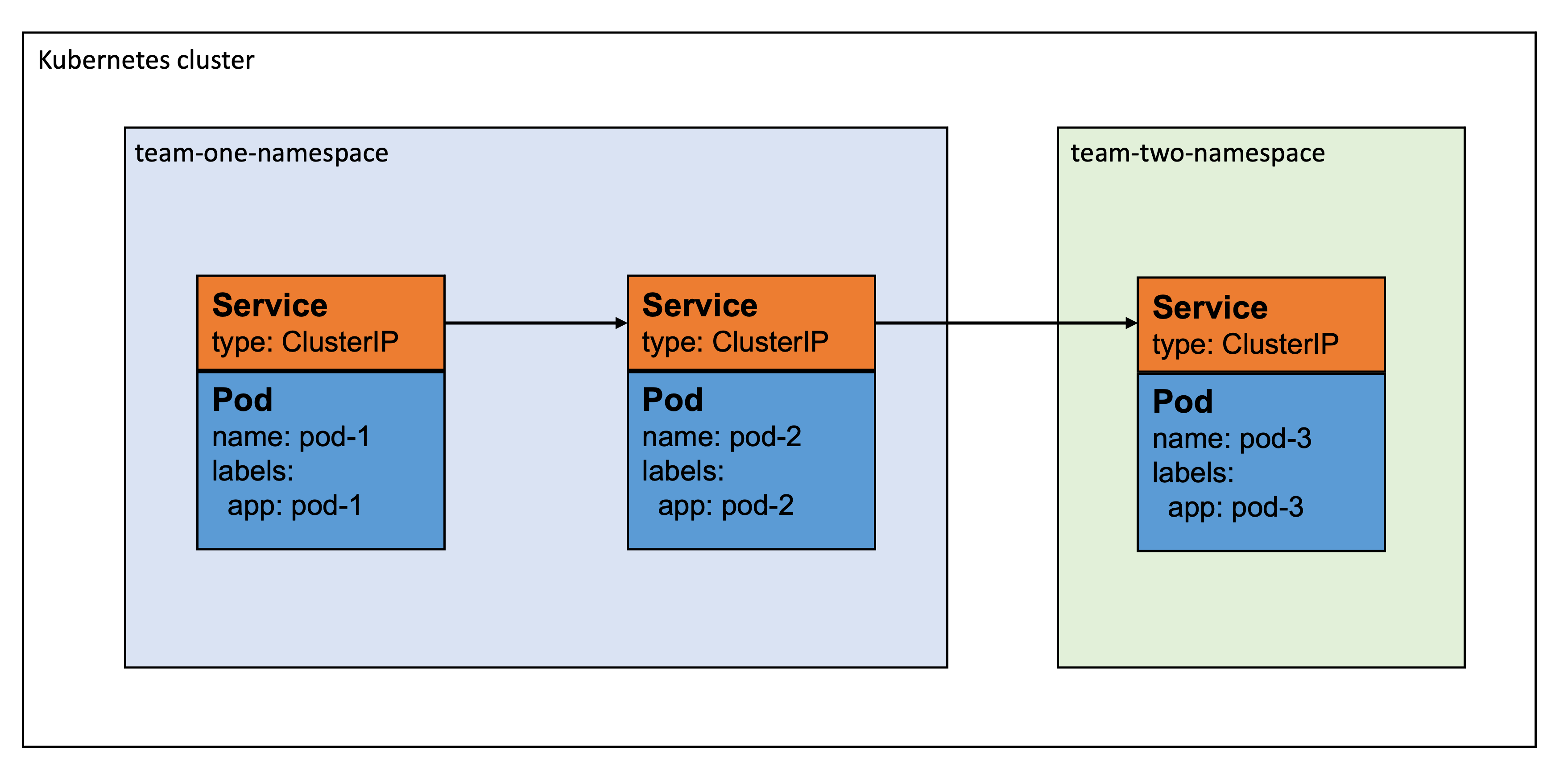

If your service needs to communicate with other services inside the cluster, you can add policies specific to selected pods on top of the default policy. Consider the following diagram:

In this example, "pod-1" wants to talk to "pod-2", and "pod-2" wants to talk to "pod-3". To allow this, we have to define a NetworkPolicy for each of the pods.

Let's start with "pod-1". This pod wishes to send traffic to "pod-2", but does not want to receive traffic from any other pod. Hence, we only need to specify a rule for egress traffic:

Using yaml:

apiVersion: networking.k8s.io/v1
kind: NetworkPolicy
metadata:
  name: "pod-1-netpol"
  namespace: "team-one-namespace"
  labels:
    app: "pod-1"
spec:
  podSelector:
    matchLabels:
      app: "pod-1"
  policyTypes:
    - Egress
  egress:
    - to:
        - podSelector:
            matchLabels:
              app: "pod-2"

Using app-template-libsonnet:

k8s_networkpolicy+:: {
  enabled: true,
  egress_to: [
    {
      name: "pod-2"
    }
  ],
},

Next is "pod-2", which wishes to send traffic to "pod-3" and receive traffic from "pod-1". In this case we need to specify rules for both egress and ingress traffic:

Using yaml:

apiVersion: networking.k8s.io/v1
kind: NetworkPolicy
metadata:
  name: "pod-2-netpol"
  namespace: "team-one-namespace"
  labels:
    app: "pod-2"
spec:
  podSelector:
    matchLabels:
      app: "pod-2"
  policyTypes:
    - Egress
    - Ingress
  egress:
    - to:
        - namespaceSelector: # Required to specify 'namespaceSelector' if pod is in another namespace
            matchLabels:
              name: "team-two-namespace"
          podSelector:
            matchLabels:
              app: "pod-3"
  ingress:
    - from:
        - podSelector:
            matchLabels:
              app: "pod-1"

Using app-template-libsonnet:

k8s_networkpolicy+:: {
  enabled: true,
  egress_to: [
    {
      namespace: "team-two-namespace",
      name: "pod-3"
    }
  ],
  ingress_from: [
    {
      name: "pod-1"
    }
  ]
},

Last is "pod-3", which wants to receive traffic from "pod-2" and does not send traffic to anyone. We only need a rule for ingress traffic in this case:

Using yaml:

apiVersion: networking.k8s.io/v1
kind: NetworkPolicy
metadata:
  name: "pod-3-netpol"
  namespace: "team-two-namespace"
  labels:
    app: "pod-3"
spec:
  podSelector:
    matchLabels:
      app: "pod-3"
  policyTypes:
    - Ingress
  ingress:
    - from:
        - namespaceSelector: # Required to specify 'namespaceSelector' if pod is in another namespace
            matchLabels:
              name: "team-one-namespace"
          podSelector:
            matchLabels:
              app: "pod-2"

Using app-template-libsonnet:

k8s_networkpolicy+:: {
  enabled: true,
  ingress_from: [
    {
      namespace: "team-one-namespace",
      name: "pod-2"
    }
  ]
},

With these NetworkPolicies defined for each pod, the pods should be able to communicate as shown in the diagram 🎉

Specifying podSelector.matchLabels when using Kustomize and commonLabels might have unexpected consequences as all occurrences of matchLabels will get the specified common labels appended. Using podSelector.matchExpressions might be a better solution in this case. Refer to this GitHub issue for more details.
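To illustrate the matchExpressions alternative, here is a minimal sketch of the "pod-1" policy rewritten with podSelector.matchExpressions. It assumes that Kustomize's commonLabels transformer only rewrites matchLabels fields and leaves matchExpressions untouched; it is worth confirming this against the output of `kustomize build` for your Kustomize version before relying on it.

```yaml
apiVersion: networking.k8s.io/v1
kind: NetworkPolicy
metadata:
  name: "pod-1-netpol"
  namespace: "team-one-namespace"
spec:
  podSelector:
    matchExpressions: # Selects the same pods as matchLabels {app: "pod-1"}
      - key: app
        operator: In
        values: ["pod-1"]
  policyTypes:
    - Egress
  egress:
    - to:
        - podSelector:
            matchExpressions: # Selects the same pods as matchLabels {app: "pod-2"}
              - key: app
                operator: In
                values: ["pod-2"]
```

An `In` expression with a single value selects the same pods as the corresponding matchLabels entry, so the policy's behavior should be unchanged.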

Cilium Network Policy

The primary advantage of Cilium Network Policies is that they go beyond Kubernetes (K8s) Network Policies, which cover Layer 3/4 only (IPs and ports): Cilium extends policy enforcement to Layer 7 (the application layer) and uses identity-based security instead of purely IP-based rules. Among other things, this allows Cilium to support FQDN-based rules. You can write a policy that allows traffic to api.example.com, and Cilium handles the DNS resolution and IP mapping automatically.
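As an illustration of what a Layer 7 rule can look like, the sketch below uses the upstream CiliumNetworkPolicy HTTP rule syntax to let "pod-1" issue only GET requests under a given path to "pod-2". The policy name, port 8080, and the path are hypothetical, and whether Layer 7 enforcement is enabled in a given cluster is an assumption to verify before using it.

```yaml
apiVersion: cilium.io/v2
kind: CiliumNetworkPolicy
metadata:
  name: "pod-2-l7-example" # Hypothetical name
  namespace: "team-one-namespace"
spec:
  endpointSelector:
    matchLabels:
      k8s:app: "pod-2"
  ingress:
    - fromEndpoints:
        - matchLabels:
            k8s:app: "pod-1"
      toPorts:
        - ports:
            - port: "8080" # Assumed application port
              protocol: TCP
          rules:
            http:
              - method: "GET"
                path: "/api/.*" # Assumed path; matched as a regular expression
```

With a rule like this, HTTP requests from "pod-1" that do not match the method and path are rejected at the application layer. A Kubernetes NetworkPolicy cannot express this, because it can only allow or deny the whole port.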

During the migration from Kubernetes network policies to Cilium in our GAP clusters, we still use the namespace default network policy, but applications can start using and taking advantage of Cilium network policies by running them side-by-side with the namespace default policy.

The main Cilium policies are supported in the GAP application specification, as shown in the examples below. It is also possible to use standalone definitions of kind: CiliumNetworkPolicy in your application manifest repositories. When using a standalone CiliumNetworkPolicy, please remember to include openings towards DNS (kube-dns in the kube-system namespace, on port 53), as shown in the example below.

To avoid conflicts, use only one type of network policy for a specific application at a time: either Kubernetes Network Policy or Cilium Network Policy.

yaml (GAP application specification):

apiVersion: gap.io/v1
kind: Application
metadata:
  name: your-application
  ...
spec:
  accessPolicy:
    inbound:
      rules:
        - namespace: namespace-2
          application: application-2
        - namespace: namespace-3
          application: application-3
    outbound:
      external:
        - host: api.example.com
          ports:
            - port: 443
              protocol: TCP
        - cidr: 10.1.1.1/32
        - cidr: 10.2.2.2/32
      rules:
        - namespace: namespace-2
          application: application-2
        - namespace: namespace-3
          application: application-3

cilium-network-policy.yaml (standalone CiliumNetworkPolicy):

apiVersion: cilium.io/v2
kind: CiliumNetworkPolicy
...
spec:
  egress:
    - toEndpoints:
        - matchLabels:
            k8s:app: application-2
            k8s:io.kubernetes.pod.namespace: namespace-2
    - toEndpoints:
        - matchLabels:
            k8s:app: application-3
            k8s:io.kubernetes.pod.namespace: namespace-3
    - toFQDNs:
        - matchName: api.example.com
      toPorts:
        - ports:
            - port: '443'
              protocol: TCP
    - toCIDR:
        - 10.1.1.1/32
    - toCIDR:
        - 10.2.2.2/32
    - toEndpoints:
        - matchLabels:
            k8s:io.kubernetes.pod.namespace: kube-system
            k8s:k8s-app: kube-dns
      toPorts:
        - ports:
            - port: '53'
              protocol: ANY
          rules:
            dns:
              - matchPattern: '*'
  endpointSelector:
    matchLabels:
      k8s:gap.io/name: your-application
  ingress:
    - fromEndpoints:
        - matchLabels:
            k8s:app: application-2
            k8s:io.kubernetes.pod.namespace: namespace-2
    - fromEndpoints:
        - matchLabels:
            k8s:app: application-3
            k8s:io.kubernetes.pod.namespace: namespace-3
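
For teams moving an existing NetworkPolicy over, the earlier "pod-2" policy could be expressed as a standalone CiliumNetworkPolicy roughly as follows. This is a sketch, not a GAP-generated manifest. The policy name is hypothetical, and it assumes the pods carry the same app labels as in the Kubernetes examples and that cross-namespace peers are selected with the k8s:io.kubernetes.pod.namespace label, as in the example above.

```yaml
apiVersion: cilium.io/v2
kind: CiliumNetworkPolicy
metadata:
  name: "pod-2-cnp" # Hypothetical name
  namespace: "team-one-namespace"
spec:
  endpointSelector:
    matchLabels:
      k8s:app: "pod-2"
  egress:
    - toEndpoints: # Allow egress to pod-3 in the other namespace
        - matchLabels:
            k8s:app: "pod-3"
            k8s:io.kubernetes.pod.namespace: "team-two-namespace"
    - toEndpoints: # DNS opening, required for standalone policies
        - matchLabels:
            k8s:io.kubernetes.pod.namespace: kube-system
            k8s:k8s-app: kube-dns
      toPorts:
        - ports:
            - port: "53"
              protocol: ANY
          rules:
            dns:
              - matchPattern: "*"
  ingress:
    - fromEndpoints: # Allow ingress from pod-1 in the same namespace
        - matchLabels:
            k8s:app: "pod-1"
```

In line with the note about using only one policy type per application, a policy like this would replace "pod-2-netpol" rather than run alongside it.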